Cynthia Dunlop has been writing about software development and testing for much longer than she cares to admit. She’s currently senior director of content strategy at ScyllaDB.

“Great system…now please make it 10 times cheaper.”

That’s not exactly what the ShareChat team wanted to hear after completing a major engineering feat: scaling a real-time feature store 1000X without scaling their database (ScyllaDB). To scale from supporting 1 million features per second to 1 billion features per second, the team had already worked through a series of performance optimizations.

You can read about those performance optimizations in Scaling an ML Feature Store From 1M to 1B Features per Second.

But ShareChat — an Indian leader in a globally competitive social media market — is always looking to optimize. After reaching this scalability milestone, the team received a follow-up challenge: reducing the feature store’s costs by 10X (without compromising performance, of course).

David Malinge, a Sr. Staff Software Engineer at ShareChat, and Ivan Burmistrov, then Principal Software Engineer at ShareChat, shared how they approached this new challenge in a keynote at Monster Scale Summit 2025. Watch the complete talk, or read the highlights below.

About Monster Scale Summit: It is a free, virtual conference on extreme-scale engineering, with a focus on data-intensive applications. Learn from leaders like antirez, creator of Redis; Camille Fournier, author of The Manager’s Path and Platform Engineering; Martin Kleppmann, author of Designing Data-Intensive Applications and more than 50 others, including engineers from Discord, Disney, Pinterest, Rivian, Datadog, LinkedIn, and UberEats. ShareChat will be delivering two talks this year. Register and join us March 11-12 for some lively chats.

ShareChat is one of the largest Indian media networks, with more than 300 million monthly active users. The app’s popularity stems from its powerful recommendation system, which is powered by its feature store.

As Malinge explained at Monster Scale Summit, “[ShareChat processes] more than two billion events daily to compute our features and serve close to a billion features per second at peak, with P99 latency under 20 milliseconds. We also read more than 30 billion rows per day in ScyllaDB, so we’re very happy that we’re not charged by the transaction.”

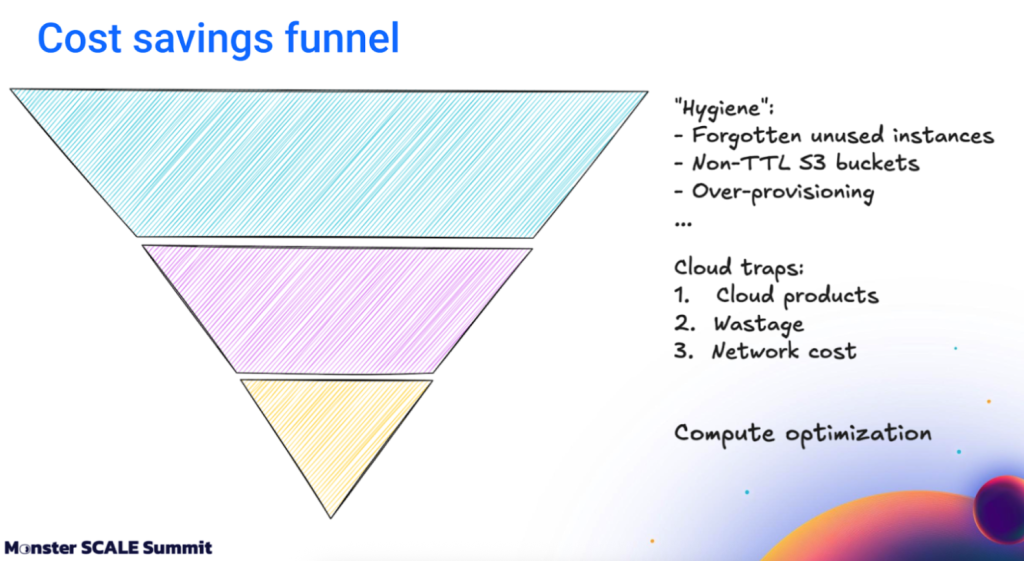

When it came time to make this system 10 times cheaper, the team very quickly realized how complicated and confusing cloud cost optimization could be.

For example, Burmistrov noted these challenges during the Monster Scale Summit:

For the first optimization step, the team became sticklers for hygiene: clearing out anything they didn’t really need. This involved a deep cleaning for forgotten instances and deployments, unused buckets, data without proper TTLs, and overprovisioning. Having proper attribution was critical here.

As Burmistrov explained, “Every workload, every instance, and every resource must be attributed to a specific service and a specific team. Without attribution, cost optimization is a global problem. With attribution, it becomes a local problem.

Once each team can clearly see the costs associated with their own services, optimization becomes much easier. The team that owns the service has both the context and the incentive to improve its cost profile. They can see which components are expensive and decide what to do about them.”

Next, they shifted focus to challenges unique to running applications in the cloud. To get the required cost savings, they had to navigate around a few cloud traps.

The first trap was the allure and ease of using the cloud provider’s own products, particularly databases. “They’re great to start with, but at scale they become painful in terms of cost,” explained Burmistrov. Scaling can be particularly costly for solutions that use a metered pricing model (e.g., based on traffic).

“We used several GCP databases, including Bigtable and Spanner, but they became expensive and, more importantly, those costs were largely out of our control; we had little leverage over cost,” continued Burmistrov.

“So we migrated many workloads to ScyllaDB. Today, more than 30 ScyllaDB clusters are deployed across the organization, including for our feature store use case. Besides stability and low latency, ScyllaDB gives us strong cost control. We can choose instance types, merge use cases into a single cluster, and run at high utilization. ScyllaDB works well at 80%, 90%, even close to 100% utilization, which gives us a very strong cost profile.”

Next, the team tackled Kubernetes wastage. In Kubernetes, you deploy containers into pods, but you pay for nodes, which are actual VMs. You typically choose node types upfront – for example, nodes with 4 CPUs and 16 GB of memory. However, over time, workloads change, teams change, and these nodes are no longer optimal. Pods cannot fully occupy the nodes due to CPU, memory, or scheduling constraints. At that point, you’re paying for resources that aren’t being used.
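To make that waste concrete, here is a minimal Go sketch. The node shape (4 CPUs, 16 GB) comes from the example above; the pod requests are hypothetical numbers chosen for illustration, and the packing is a simple first-fit pass, not the real Kubernetes scheduler.

```go
package main

import "fmt"

// Resources is a CPU/memory pair, used for both node capacity and pod requests.
type Resources struct {
	CPU   float64 // cores
	MemGB float64
}

// packFirstFit places each pod on the first node with room and opens a new
// node when none fits. It returns the free capacity left on each node.
// Every pod is assumed to fit on an empty node.
func packFirstFit(node Resources, pods []Resources) []Resources {
	var free []Resources
	for _, p := range pods {
		placed := false
		for i := range free {
			if free[i].CPU >= p.CPU && free[i].MemGB >= p.MemGB {
				free[i].CPU -= p.CPU
				free[i].MemGB -= p.MemGB
				placed = true
				break
			}
		}
		if !placed {
			free = append(free, Resources{node.CPU - p.CPU, node.MemGB - p.MemGB})
		}
	}
	return free
}

// wastedCPUFraction returns the share of paid-for CPU that no pod requested.
func wastedCPUFraction(node Resources, free []Resources) float64 {
	if len(free) == 0 {
		return 0
	}
	var idle float64
	for _, f := range free {
		idle += f.CPU
	}
	return idle / (node.CPU * float64(len(free)))
}

func main() {
	node := Resources{CPU: 4, MemGB: 16}
	// Hypothetical workload: three pods that each request 3 CPUs and 4 GB.
	pods := []Resources{{3, 4}, {3, 4}, {3, 4}}
	free := packFirstFit(node, pods)
	fmt.Printf("nodes=%d wasted CPU=%.0f%%\n", len(free), 100*wastedCPUFraction(node, free))
}
```

With these made-up requests, no two pods share a node, so three nodes are billed while a quarter of their CPU (and most of their memory) sits idle.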

“You may decide, ‘Okay, let’s isolate workloads into separate node pools,’” explained Burmistrov. “But it’s not a solution because, as shown in the image below, we have one node completely wasted.” Simple isolation just moves waste around.

The solution: dynamic node allocation based on workloads. Options include open-source solutions like Karpenter, cloud-specific ones like GKE Autopilot, and commercial multi-cloud solutions like Cast AI.

ShareChat likes Cast AI because it lets them fine-tune the configuration for long-term commitments, spot instances, and other pricing factors.

And then there are network costs, which the ShareChat team calls “the cloud tax.”

Massive apps like ShareChat deploy across multiple zones for high availability. However, multiple zones need to communicate with one another, and traffic between zones incurs its own cost: Network Inter-Zone Egress (NIZE). That’s the outbound network traffic between availability zones within the same region. It’s billed separately by many cloud providers.

For example, if you deploy ScyllaDB across three GCP zones, with two nodes per zone, and use a non-ScyllaDB driver (not recommended), reads may cross zones both at the client level and within the cluster. For traffic of volume T, you pay for T in NIZE.

ScyllaDB drivers help here. As Burmistrov explained, “Using ScyllaDB’s token-aware routing removes the extra hop inside the cluster, reducing inter-zone traffic. Using token-aware plus zone-aware routing ensures reads stay within the same zone, reducing inter-zone traffic to zero for reads.” Token-aware routing on its own, by removing the extra hop, cuts NIZE to about 2/3 of T.

On the write path, it’s a little different because replication is required. Burmistrov continued, “With three zones, writes generate 2 times T of inter-zone traffic. You can trade availability for cost by using two zones instead of three, reducing this to 1.5 times T.

ScyllaDB also lets teams model each zone as a separate data center. In that case, for traffic T, you pay for T of inter-zone traffic. You generally can’t go lower unless you deploy in a single zone.”
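The multipliers quoted above can be reproduced with a little arithmetic. The Go sketch below is one way to model it, under assumptions that are ours rather than the speakers': a replication factor of 3, client traffic spread evenly across zones, a coordinator that is a replica in the client's zone for writes, and each copy that crosses a zone boundary billed once.

```go
package main

import "fmt"

// writeNIZE returns inter-zone write traffic as a multiple of T.
// replicasPerZone[i] is the number of replicas held in zone i.
// Assumption: writes arrive evenly in every zone and the coordinator is a
// replica in the client's zone, so only copies to other zones are billed.
func writeNIZE(replicasPerZone []int) float64 {
	total := 0
	for _, r := range replicasPerZone {
		total += r
	}
	sum := 0.0
	for _, local := range replicasPerZone {
		sum += float64(total - local) // copies that must leave the coordinator's zone
	}
	return sum / float64(len(replicasPerZone))
}

// readNIZE returns inter-zone read traffic as a multiple of T for a
// token-aware driver that reads from a single replica.
// Assumption: one replica per zone; without zone awareness the replica is
// picked uniformly at random.
func readNIZE(zones int, zoneAware bool) float64 {
	if zoneAware {
		return 0 // the read never leaves the client's zone
	}
	return float64(zones-1) / float64(zones)
}

func main() {
	fmt.Printf("writes, 3 zones (1+1+1 replicas): %.1fT\n", writeNIZE([]int{1, 1, 1}))
	fmt.Printf("writes, 2 zones (2+1 replicas):   %.1fT\n", writeNIZE([]int{2, 1}))
	fmt.Printf("reads, token-aware:               %.2fT\n", readNIZE(3, false))
	fmt.Printf("reads, token-aware + zone-aware:  %.2fT\n", readNIZE(3, true))
}
```

Under these assumptions the model gives 2T and 1.5T for writes and 2/3 T and zero for reads, matching the figures from the talk. The zone-per-data-center layout is not modeled here.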

Tip: Oracle Cloud and Azure don’t charge for inter-zone traffic. If you use one of these cloud providers, you can deploy across three zones with zero inter-zone cost.

Another challenge: ShareChat’s generally stable read latencies would spike above their SLAs during write-heavy workloads (e.g., a backfill job).

The usual option — just scale up the database — would be simple, but the team wanted to reduce costs. Isolating reads and writes into separate data centers could technically improve performance, but that would be even worse from a cost perspective. That approach would double the infrastructure and probably lead to underutilization.

Ultimately, they discovered and applied ScyllaDB’s workload prioritization. This lets them control how their various workloads compete for system resources.

Malinge explained, “Under the hood, this relies on ScyllaDB’s thread-per-core architecture. Each core runs an async scheduler with multiple queues, each with its own priority — workload prioritization maps directly to these priorities. Looking at the image, we have a very high compute share for serving because we have strong SLAs on the read path, which is user-facing.

Most of the rest goes to less latency-sensitive workloads from asynchronous jobs, such as writing features to the database. We also keep a small share for manual queries (for example, debugging). This enables us to have exactly what we want – different latencies for reads and writes – and that allowed us to have the best possible serving for our features.”
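The idea of share-based prioritization can be illustrated with a small Go sketch. It is a simplified model of proportional sharing, not ScyllaDB's scheduler, and the workload names and share values are hypothetical because the talk does not give the real ones.

```go
package main

import (
	"fmt"
	"sort"
)

// cpuSplit divides one core among the workloads that currently have work
// queued, in proportion to their shares. Idle workloads get nothing, so
// their portion is redistributed to the active ones.
func cpuSplit(shares map[string]int, active []string) map[string]float64 {
	total := 0
	for _, name := range active {
		total += shares[name]
	}
	out := make(map[string]float64, len(active))
	if total == 0 {
		return out
	}
	for _, name := range active {
		out[name] = float64(shares[name]) / float64(total)
	}
	return out
}

func main() {
	// Hypothetical share values, for illustration only.
	shares := map[string]int{"serving": 800, "async_writes": 150, "manual": 50}

	scenarios := [][]string{
		{"serving", "async_writes", "manual"}, // backfill running: everything contends
		{"async_writes"},                      // quiet period: writes may use the whole core
	}
	for _, active := range scenarios {
		split := cpuSplit(shares, active)
		names := make([]string, 0, len(split))
		for n := range split {
			names = append(names, n)
		}
		sort.Strings(names) // stable output order
		for _, n := range names {
			fmt.Printf("%s=%.0f%% ", n, 100*split[n])
		}
		fmt.Println()
	}
}
```

The point of the model is that shares only matter under contention: a write-heavy job can use an otherwise idle core, but it cannot crowd out serving traffic when both are busy.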

That serving layer is a distributed gRPC (Google Remote Procedure Call) service that handles and caches consumer requests. The team made some cost optimizations here, too.

One of the most impactful was a clever shortcut: instead of fully deserializing complex Protobuf messages, they treated repeated messages as serialized byte blobs. Since Protobuf encodes embedded messages using the same wire format as bytes, they could simply append these serialized records to merge data.

With that, they could skip the heavy lift of fully unpacking and rebuilding the messages from scratch. That optimization alone could be the subject of an entire article; please see the video (starting at 18:15) for a detailed walkthrough of their approach.

Malinge’s top lesson learned from optimizing this layer:

“The first advice is the Captain Obvious one: don’t go blindly. We set up continuous profiling very early on. Nowadays, there are tons of tools available. Integration is super straightforward. You might recognize the vanilla GCP profiler in the image below. It’s just four lines of code to add to Go programs, and it’s really helped us on our quest to reduce costs. We’ve actually unlocked more than a 50% reduction in compute by doing optimizations guided by continuous profiling.”

Another lesson learned: if you’re using Protobuf at high RPS, serialization and deserialization (serde) is probably burning CPU. Even with ~95% cache hit rates, the hot path was still deserializing protos, merging them, and serializing them again just to stitch responses together. Most of that work was pointless.

The team ended up leveraging Protobuf’s wire format, where repeated fields are just sequences of records and embedded messages can be treated as raw bytes (no deserialization). They switched to this “lazy” deserialization and merged cached protos without ever touching individual fields. For a practical example, see the david-sharechat/lazy-proto repository.
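The wire-format property behind this trick can be shown with the Go standard library alone. The sketch below is not ShareChat's code: the `FeatureList` schema, its field number, and the helper names are hypothetical, and the payloads are placeholder bytes standing in for serialized embedded messages. It relies on two documented Protobuf behaviors: each element of a repeated message field is a tag, a length, and the payload, and concatenating two serialized messages merges their repeated fields.

```go
package main

import (
	"encoding/binary"
	"errors"
	"fmt"
)

// Hypothetical schema, for illustration only:
//   message FeatureList { repeated Feature features = 1; }
// featuresTag is field number 1 with wire type 2 (length-delimited).
const featuresTag = 1<<3 | 2

// appendRecord appends one already-serialized embedded message as a record.
func appendRecord(buf, payload []byte) []byte {
	buf = binary.AppendUvarint(buf, featuresTag)
	buf = binary.AppendUvarint(buf, uint64(len(payload)))
	return append(buf, payload...)
}

// lazyMerge merges serialized FeatureList messages by concatenation.
// No field of any embedded message is decoded.
func lazyMerge(msgs ...[]byte) []byte {
	var out []byte
	for _, m := range msgs {
		out = append(out, m...)
	}
	return out
}

// countRecords walks the top-level records only, skipping over payloads.
func countRecords(msg []byte) (int, error) {
	n := 0
	for len(msg) > 0 {
		tag, k := binary.Uvarint(msg)
		if k <= 0 || tag != featuresTag {
			return 0, errors.New("unexpected or malformed tag")
		}
		msg = msg[k:]
		size, k := binary.Uvarint(msg)
		if k <= 0 || size > uint64(len(msg)-k) {
			return 0, errors.New("malformed length")
		}
		msg = msg[k+int(size):]
		n++
	}
	return n, nil
}

func main() {
	// Placeholder payloads stand in for serialized Feature messages.
	cached := appendRecord(appendRecord(nil, []byte("feature-a")), []byte("feature-b"))
	fresh := appendRecord(nil, []byte("feature-c"))

	merged := lazyMerge(cached, fresh)
	n, err := countRecords(merged)
	fmt.Println(n, err)
}
```

The merge step is a byte copy whose cost depends on the total size, not on how many fields the embedded messages contain, which is why skipping the decode and re-encode round trip saves so much CPU on the hot path.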

And one parting tip: the wins compound fast. Benchmarking showed lazy merging was about 6 times faster, with about a third of the allocations. In production, that meant lower compute bills and better tail latency.

The team’s smart work here underscores that cost optimizations don’t have to compromise the product. They can actually act as a catalyst for better system design.

Malinge left us with this: “Our cost savings initiatives actually led to a lot of innovations. As I tried to represent in the left quadrant, there is a very unhealthy way to look at cost savings – one that focuses on shortcuts that negatively impact your product. The key is to stay on the right side of this quadrant, to aim for the sweet spot of reducing costs while improving the product.”

Source: thenewstack.io…